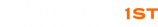

Smart Audio Awareness Glasses

Machine Learning-Powered Wearable for Real-Time Audio Direction Detection

Product Design Requirements

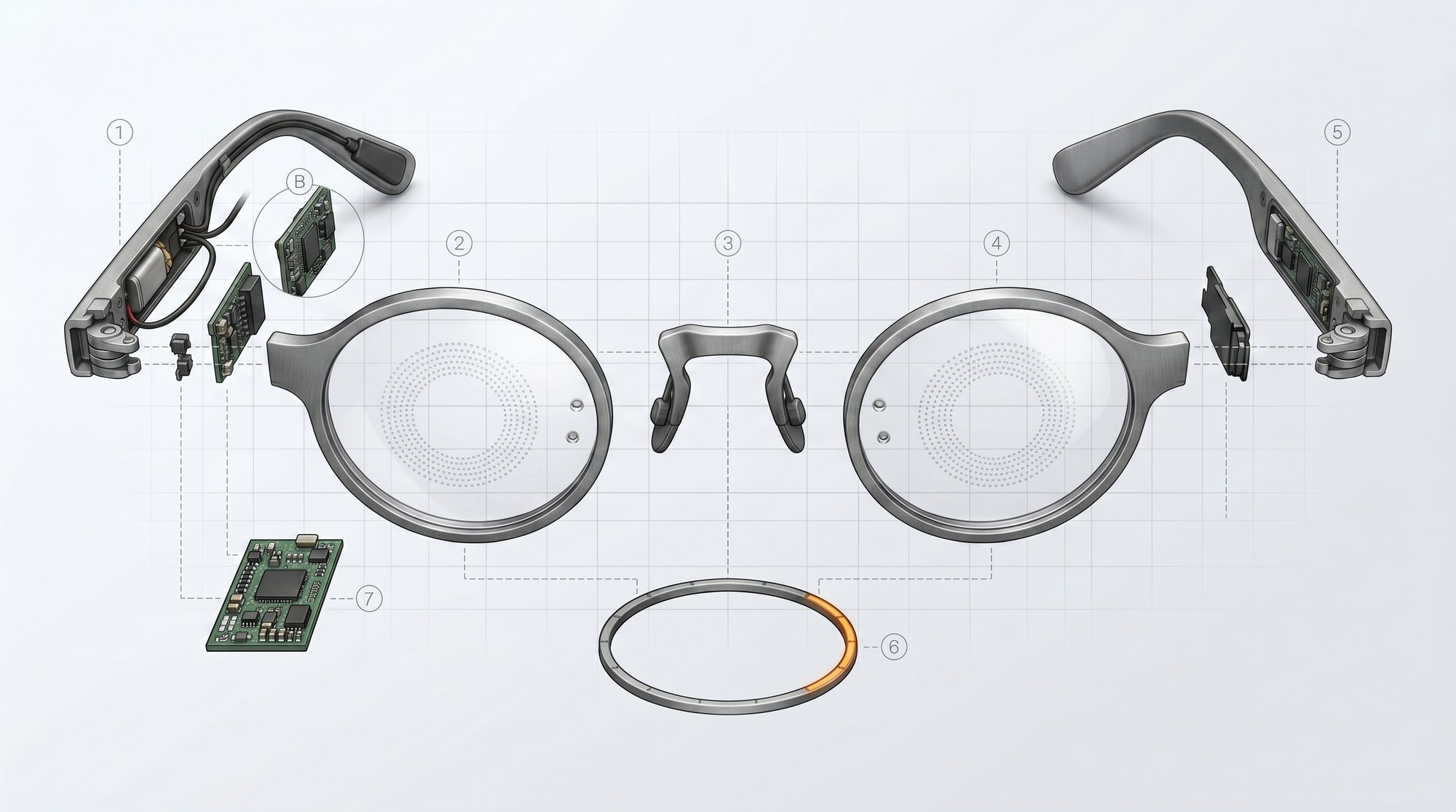

David Thorn, Founder and President of Purple Dragon LLC, engaged Design 1st (D1) to prove out a wearable audio direction-finding device built into eyeglasses. The concept, described in a US patent, places microphones in the frame rims and an LED ring on the rear inner surface of each rim, within the wearer’s peripheral vision.

When sound arrives, the LEDs light in its direction, giving people with hearing loss a visual channel for spatial awareness. Nothing off the shelf could do this. D1 was brought in to research viable methods and build a technology demonstrator from the ground up.

- Build a proof-of-concept multi-microphone device for audio source detection, volume measurement, and frequency analysis

- Design a 6-microphone circular array optimized for spatial audio capture with matched sensitivity, SNR, gain, and phase response

- Develop an ML-based prediction system that adapts to varying acoustic environments, from quiet rooms to noisy settings

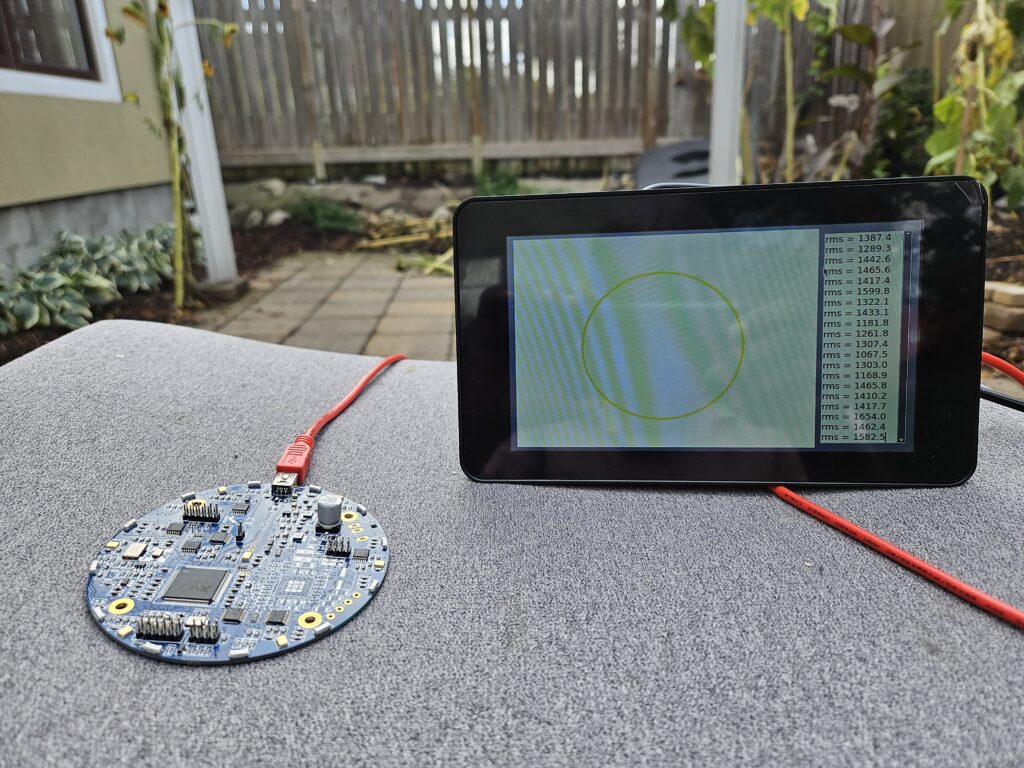

- Deliver a standalone demo system running real-time inference on embedded hardware

- Primary application: assistive technology for hearing-impaired users. Secondary: alerting workers in high-noise construction and industrial environments

The Electronics Engineering Challenges

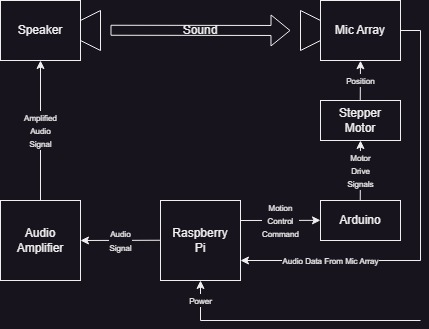

Traditional analytical methods for audio source direction detection rely on complex mathematical models and perform poorly in reverberant, real-world environments. Design 1st’s electronics team selected a machine learning approach for its potential to adapt to diverse acoustic conditions without manual parameter tuning.

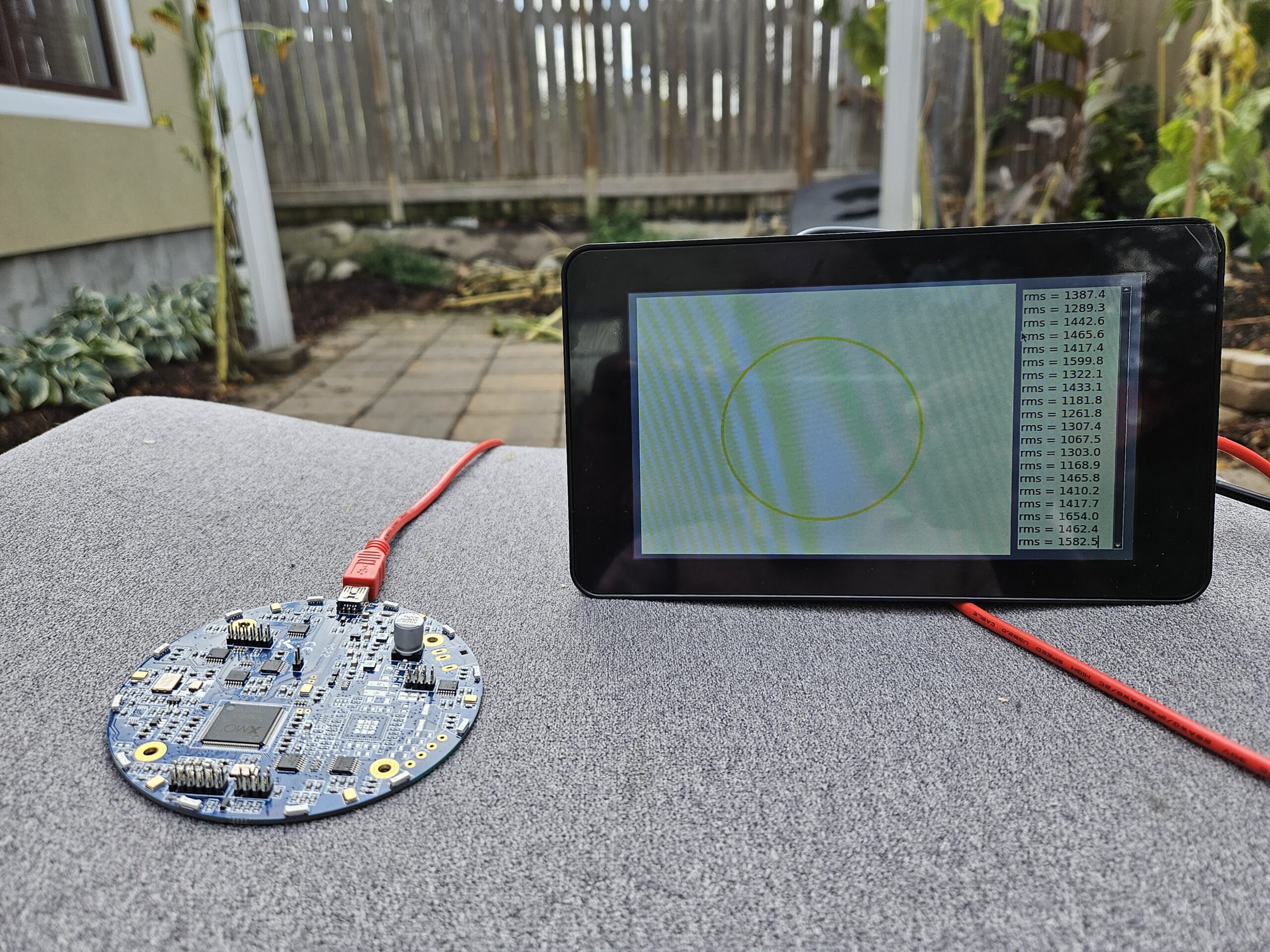

- Characterized and optimized the microphone array, covering geometry (placement and spacing) and channel matching (sensitivity, phase response), to capture the interchannel differences needed for direction-of-arrival estimation

- Developed multi-channel audio capture circuitry that digitizes simultaneous audio streams at sufficient resolution for ML feature extraction

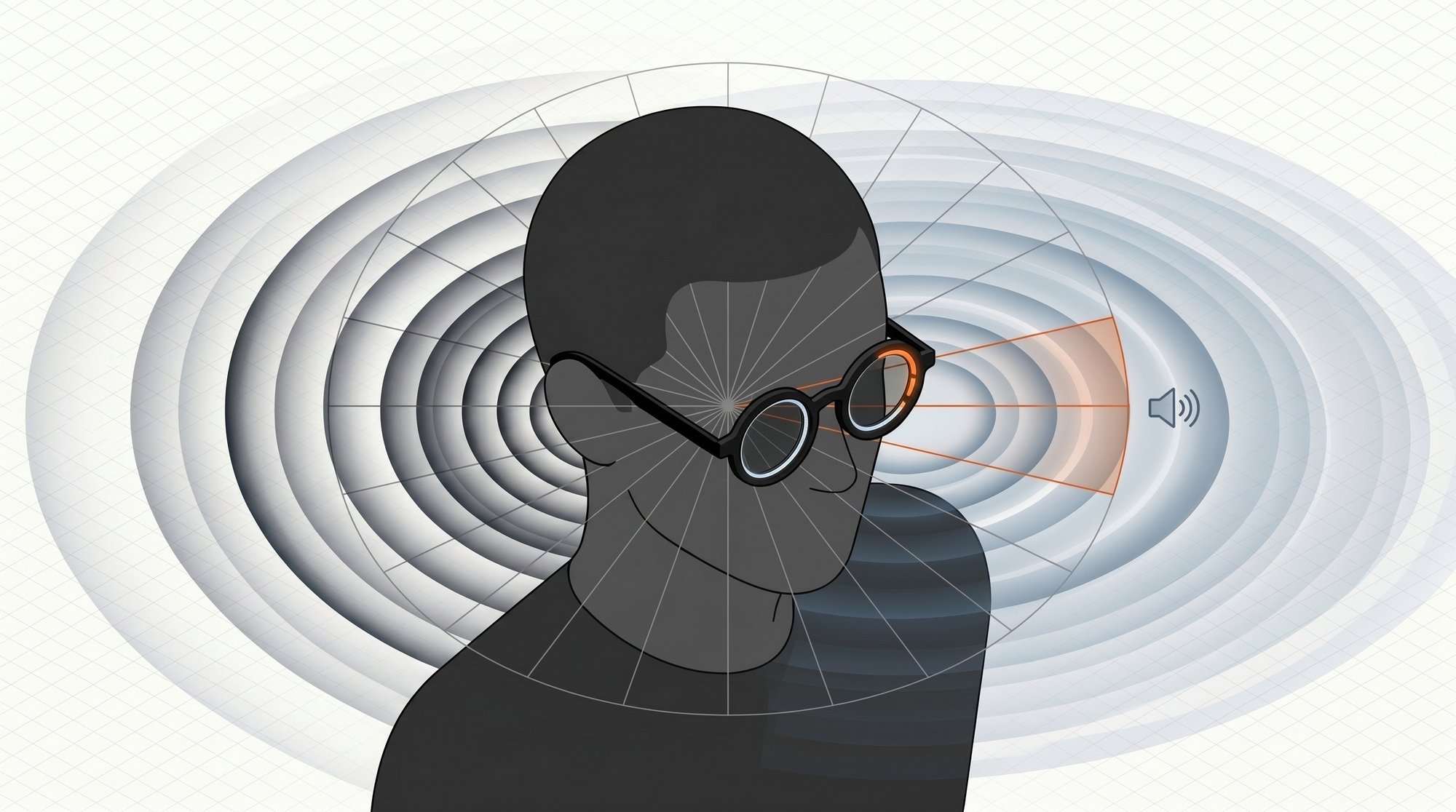

- Evaluated off-the-shelf vs. custom board architectures and built an embedded demo platform for rapid iteration

- Built automated data-capture tooling to rotate the array through full 360° sweeps at controlled angles, generating hundreds of training samples per session
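To illustrate what "interchannel differences" means for a circular array, here is a minimal sketch of the far-field arrival-time model for six evenly spaced microphones. It is not D1's tooling: the array radius, the 30° sweep step, and the function names are hypothetical values chosen for illustration, and the model ignores reverberation and head shadowing.

```python
import math

SPEED_OF_SOUND = 343.0  # m/s in air at roughly 20 °C


def mic_angles(n_mics=6):
    """Angular position (radians) of each mic on an evenly spaced ring."""
    return [2 * math.pi * i / n_mics for i in range(n_mics)]


def arrival_delays(source_deg, radius_m, n_mics=6):
    """Far-field arrival time at each mic relative to the array centre (s).

    A mic facing the source hears the wavefront early (negative delay);
    the mic on the opposite side hears it late (positive delay).
    """
    theta = math.radians(source_deg)
    return [
        -radius_m / SPEED_OF_SOUND * math.cos(theta - phi)
        for phi in mic_angles(n_mics)
    ]


def sweep(step_deg, radius_m, n_mics=6):
    """Pair each angle of a full 360° sweep with its ideal delay pattern."""
    return [
        (angle, arrival_delays(angle, radius_m, n_mics))
        for angle in range(0, 360, step_deg)
    ]


# Hypothetical example: 5 cm radius, one capture position every 30 degrees.
labelled = sweep(step_deg=30, radius_m=0.05)
```

Each sweep position yields a distinct delay pattern across the six channels, which is the kind of signal a direction model can learn from. Recorded data would add level and phase mismatches on top of these ideal delays.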

The Machine Learning & Software Challenges

On the software side, the classical baseline is a cross-correlation method such as GCC-PHAT (generalized cross-correlation with phase transform), which often struggles in reverberant, real-world environments. The team chose a machine learning approach for its ability to generalize across diverse acoustic conditions without manual parameter tuning.
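For context, the GCC-PHAT baseline mentioned here can be sketched in a few lines for a single microphone pair. This is a textbook illustration using NumPy, not code from the project; the function names, the sample rate, and the microphone spacing are assumptions. It also shows why the method is fragile: it picks one correlation peak, and reflections in a reverberant room produce competing peaks.

```python
import math

import numpy as np


def gcc_phat_delay(sig, ref, max_lag):
    """Estimate the delay of `sig` relative to `ref`, in samples.

    A positive result means `sig` lags `ref`.
    """
    n = len(sig) + len(ref)             # zero-pad so correlation is linear
    sig_f = np.fft.rfft(sig, n=n)
    ref_f = np.fft.rfft(ref, n=n)
    cross = sig_f * np.conj(ref_f)
    cross /= np.abs(cross) + 1e-12      # PHAT weighting: keep phase only
    cc = np.fft.irfft(cross, n=n)
    # Reorder so the lags run from -max_lag to +max_lag.
    cc = np.concatenate((cc[-max_lag:], cc[:max_lag + 1]))
    return int(np.argmax(cc)) - max_lag


def delay_to_angle_deg(delay_samples, sample_rate_hz, spacing_m,
                       speed_of_sound=343.0):
    """Convert a pairwise delay to an angle from broadside (far-field model)."""
    s = speed_of_sound * delay_samples / (sample_rate_hz * spacing_m)
    s = max(-1.0, min(1.0, s))          # clamp against rounding and noise
    return math.degrees(math.asin(s))
```

A single pair only resolves an angle relative to its own axis, with a front/back ambiguity; a multi-microphone array combines several pairs to cover the full circle.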

- Constructed a custom sound isolation test chamber with acoustic treatment, supplementing outdoor and indoor recording sessions across varied acoustic profiles

- Trained deep learning models to correlate interchannel audio differences with angle of incidence, regardless of audio content or ambient conditions

- Used acoustic simulation software to generate synthetic training datasets, covering room geometries and noise conditions faster than physical recording alone

- Deployed real-time inference on edge hardware with low-latency detection and threshold-based filtering to reject background noise
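The threshold-based filtering in the last point can be sketched as a simple gate in front of the display. This is one plausible reading, not the deployed logic: the source does not say which quantity is thresholded, so the level floor, the confidence cutoff, the eight-LED ring, and all names below are hypothetical.

```python
import math


def rms_dbfs(frame):
    """RMS level of a frame of samples in [-1, 1], in dB re full scale."""
    if not frame:
        return float("-inf")
    rms = math.sqrt(sum(s * s for s in frame) / len(frame))
    return 20 * math.log10(rms) if rms > 0 else float("-inf")


def gate_prediction(frame, predicted_deg, confidence,
                    level_floor_db=-40.0, min_confidence=0.6):
    """Return the predicted angle, or None when the frame should be ignored.

    A frame is rejected when it is too quiet (likely background noise)
    or when the model's confidence is below the cutoff.
    """
    if rms_dbfs(frame) < level_floor_db or confidence < min_confidence:
        return None
    return predicted_deg % 360.0


def led_index(angle_deg, n_leds=8):
    """Map an angle to the nearest LED on an evenly spaced ring."""
    sector = 360.0 / n_leds
    return int((angle_deg % 360.0 + sector / 2) // sector) % n_leds
```

Gating before display keeps the LEDs dark during ambient noise, so a lit LED always corresponds to a sound the model is reasonably sure about.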

Product Results

The project validated that ML-based audio spatial localization is a viable path to a wearable assistive device. A standalone demo system was shipped to the client, where independent testing replicated D1’s directional detection findings, confirming the approach works outside a controlled lab.

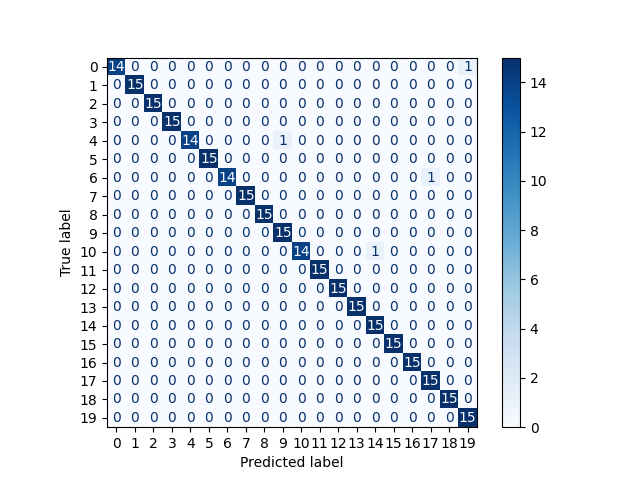

- Direction classification accuracy above 95%, validated across multiple independent test datasets

- Functional embedded demo unit with real-time audio direction inference and visual feedback, with a recorded demo video of live detection

- D1’s engineering leadership confirmed a purpose-built product can meet the size and power constraints of a head-mounted assistive device

- Project advanced from R&D into early Detailed Engineering, laying the groundwork for a production-ready product